In a digital landscape dominated by algorithms, social media platforms are increasingly deploying artificial intelligence and machine learning tools to manage content and advertising—often with unintended consequences for information integrity.

These sophisticated AI systems, primarily designed to maximize user engagement, are inadvertently amplifying problematic content across platforms. Industry experts warn that the algorithms powering recommendation and ad-targeting systems frequently boost sensationalist material, including misinformation and disinformation, simply because such content drives user interaction.

The issue lies at the intersection of technology and business incentives. Social media companies rely on engagement metrics to support their advertising-driven revenue models, creating a troubling dynamic where content that provokes strong reactions—regardless of accuracy—receives algorithmic preference.

“What we’re seeing is a perfect storm of technological capability and profit motivation,” explains Dr. Maya Hernandez, a digital ethics researcher at Stanford University. “These systems are extraordinarily efficient at what they do, but they’re optimized for engagement rather than information quality.”

The problem has drawn attention from lawmakers on both sides of the Atlantic. In the European Union, the Digital Services Act introduces new transparency requirements for platforms regarding their recommendation systems and content moderation practices. The legislation represents the most comprehensive regulatory framework addressing algorithmic amplification to date.

Meanwhile, in the United States, several legislative proposals aim to address different aspects of the problem. The Platform Accountability and Transparency Act would grant qualified researchers access to platform data, while the Algorithmic Justice and Online Platform Transparency Act focuses on discriminatory algorithmic processes. However, comprehensive legislation addressing algorithmic amplification of misinformation remains elusive in the U.S.

Technology experts suggest multiple pathways toward improvement. Platforms could significantly enhance transparency around their policies and algorithmic systems, allowing users and researchers to better understand how and why certain content spreads. This includes providing clear explanations of moderation decisions and publishing regular impact assessments of recommendation systems.

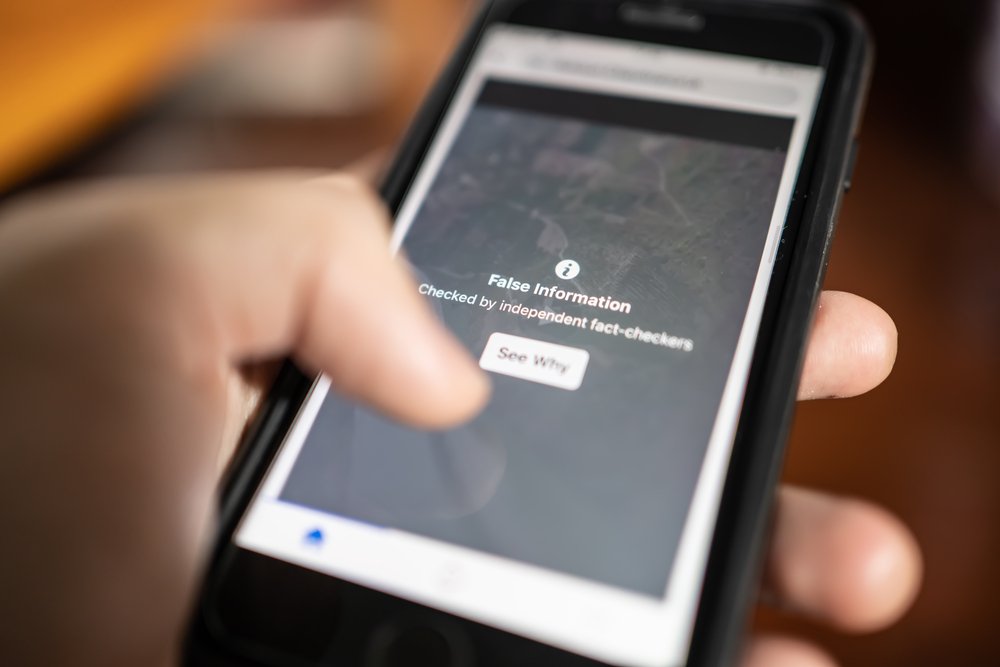

Fact-checking infrastructure also requires substantial investment. While most major platforms have implemented some form of fact-checking, these systems struggle to scale effectively against the volume of content produced daily. Experts recommend platforms dedicate more resources toward human moderation teams working in concert with improved AI tools.

User empowerment represents another promising approach. “Giving users more control over what they see could help break problematic feedback loops,” notes Samira Johnson, policy director at the Digital Rights Coalition. “Simple toggles to adjust algorithmic feeds or opt out of certain recommendation systems could significantly reduce exposure to misleading content.”

The research community has repeatedly called for better data access to study these issues independently. Despite some progress through initiatives like Meta’s Facebook Open Research and Transparency project, researchers still face substantial barriers when attempting to investigate algorithmic amplification across platforms.

While some social media companies have implemented changes—Twitter (now X) and Facebook have introduced features allowing users to switch between algorithmic and chronological feeds, for instance—critics argue these efforts remain insufficient given the scale of the problem.

The tension between business models and information integrity persists. Platforms earn revenue primarily through advertising, creating powerful disincentives to reduce engagement, even when that engagement comes through provocative or misleading content.

This reality has led many experts to conclude that meaningful change will require regulatory intervention alongside voluntary platform improvements. As digital infrastructure becomes increasingly central to civic discourse, the stakes of allowing algorithmic amplification to continue unchecked grow higher.

Without comprehensive action from both industry and policymakers, AI systems designed to maximize engagement will likely continue promoting content based on its ability to provoke reaction rather than inform—potentially undermining the information ecosystem upon which democratic societies depend.
