
AI companies OpenAI and Anthropic have introduced chatbot features designed specifically for medical inquiries, raising both possibilities and concerns in the healthcare tech space, experts say.

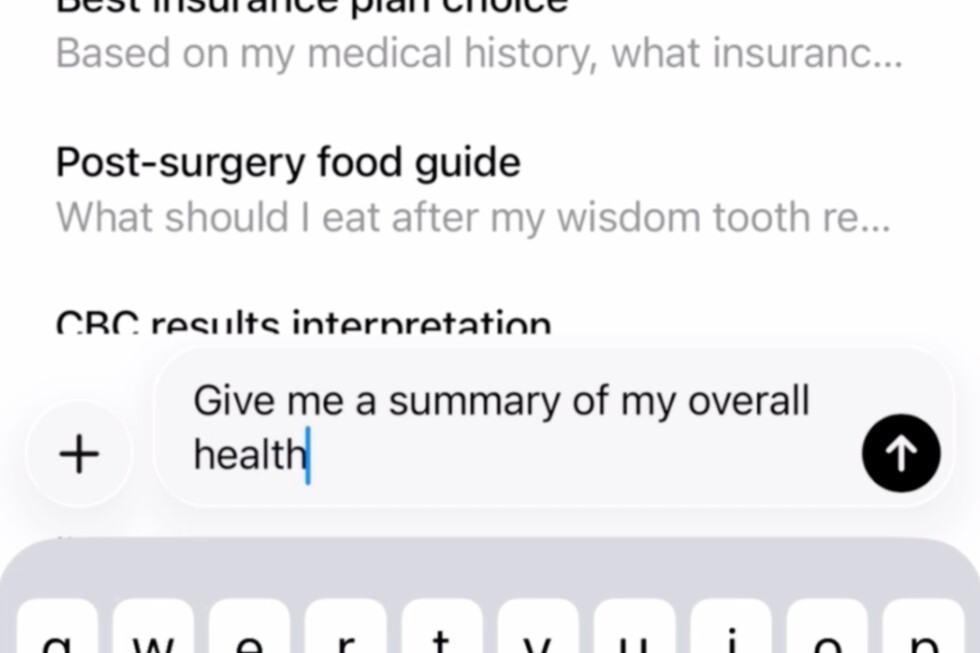

OpenAI’s ChatGPT Health, currently available through a waiting list, and similar features in Anthropic’s Claude chatbot can analyze users’ medical records, wellness app data, and information from wearable devices to provide personalized health insights.

Both companies emphasize these tools should not replace professional medical care or be used for diagnosis. Instead, they’re positioning the chatbots as supplements that can help users better understand test results, prepare for doctor’s appointments, and identify health trends hidden in their medical data.

Dr. Robert Wachter, a medical technology expert at the University of California, San Francisco, sees potential benefits in these AI health assistants. “The alternative often is nothing, or the patient winging it,” Wachter noted. “If you use these tools responsibly, I think you can get useful information.”

Unlike standard internet searches, the latest generation of health-focused AI tools can draw on a user’s medical history, including prescriptions, age, and physician notes. Even without access to formal medical records, experts recommend providing chatbots with as much relevant personal health information as possible to improve response quality.

However, medical professionals stress clear boundaries for AI health tool usage. Dr. Lloyd Minor, dean of Stanford University’s medical school, advises approaching these platforms with “a degree of healthy skepticism,” particularly for significant health decisions.

“If you’re talking about a major medical decision, or even a smaller decision about your health, you should never be relying just on what you’re getting out of a large language model,” Minor cautioned.

For urgent symptoms like chest pain, severe headaches, or breathing difficulties, experts emphasize the importance of seeking immediate medical attention rather than consulting AI.

Privacy concerns represent another significant consideration. Unlike information shared with healthcare providers, data uploaded to AI platforms isn’t protected by HIPAA, the federal law governing medical information privacy.

“When someone is uploading their medical chart into a large language model, that is very different than handing it to a new doctor,” Minor explained. “Consumers need to understand that they’re completely different privacy standards.”

Both OpenAI and Anthropic have stated that users’ health information receives additional privacy protections and is stored separately from other data. The companies claim they don’t use health information to train their AI models, and users must explicitly consent to share information and can disconnect their data at any time.

Independent research on these AI health tools remains limited, and early studies show mixed results. While AI systems have performed well on medical examinations, they often struggle in conversations with real users.

A recent Oxford University study involving 1,300 participants found that people using AI chatbots for health research didn’t make better decisions than those using standard online searches or personal judgment. The research revealed that while chatbots could correctly identify medical conditions 95% of the time when presented with comprehensive information, communication problems emerged during actual user interactions.

“The place where things fell apart was during the interaction with the real participants,” said Adam Mahdi of the Oxford Internet Institute, who led the study. Users frequently failed to provide necessary information, while the AI systems often delivered a mix of good and bad advice that users struggled to differentiate.

As this technology evolves, experts suggest comparing responses across multiple AI platforms as a form of digital “second opinion.”

“I will sometimes put information into ChatGPT and information into Gemini,” Wachter said, referring to Google’s AI tool. “And when they both agree, I feel a little bit more secure that that’s the right answer.”

The healthcare AI landscape continues to develop rapidly, with companies working to improve how chatbots interact with users to elicit critical health details. Experts anticipate these tools will become more sophisticated in their conversational abilities, potentially mirroring the back-and-forth dialogue of physician consultations.


8 Comments

As AI chatbots become more advanced, the line between supplementary health tools and standalone medical advice becomes increasingly blurred. Rigorous testing, regulatory oversight, and public awareness campaigns will be essential to ensure these technologies are used safely and appropriately.

Well said. Responsible development and deployment of these AI health tools must be a top priority to protect patient wellbeing and maintain trust in the healthcare system.

I’m curious to see how the health-focused AI chatbots from OpenAI and Anthropic will evolve and be implemented. Providing personalized insights from medical data could be valuable, but ensuring patient privacy and avoiding misdiagnosis will be critical challenges.

Good point. Robust data privacy and security measures will be crucial, as will transparent guidelines on the appropriate use of these AI tools. Striking the right balance between convenience and responsible healthcare practices will be key.

Interesting development in the health tech space. While AI chatbots could provide useful health insights, it’s crucial that users understand their limitations and don’t rely on them for diagnosis or treatment. Responsible use and complementing professional medical care is key.

Agreed. These tools should be positioned as supplementary resources, not replacements for professional healthcare. Careful oversight and clear communication around their capabilities and limitations will be essential.

The emergence of AI-powered health chatbots raises important questions about the future of healthcare delivery. While they may provide valuable insights, their limitations must be clearly communicated to avoid any misunderstandings or misuse that could compromise patient safety.

While the potential benefits of AI-powered health assistants are intriguing, I share the concerns around overreliance on these tools and the need for clear boundaries. Proactive patient education and collaboration with medical professionals will be vital.