Jury Deliberates in Landmark Meta Trial Over Child Safety in New Mexico

A New Mexico jury began deliberations Monday in a groundbreaking trial in which social media giant Meta faces accusations of misleading users about the safety of its platforms for children. The case, heard in state court, is one of the first social media impact lawsuits to reach trial amid a growing wave of litigation targeting tech companies.

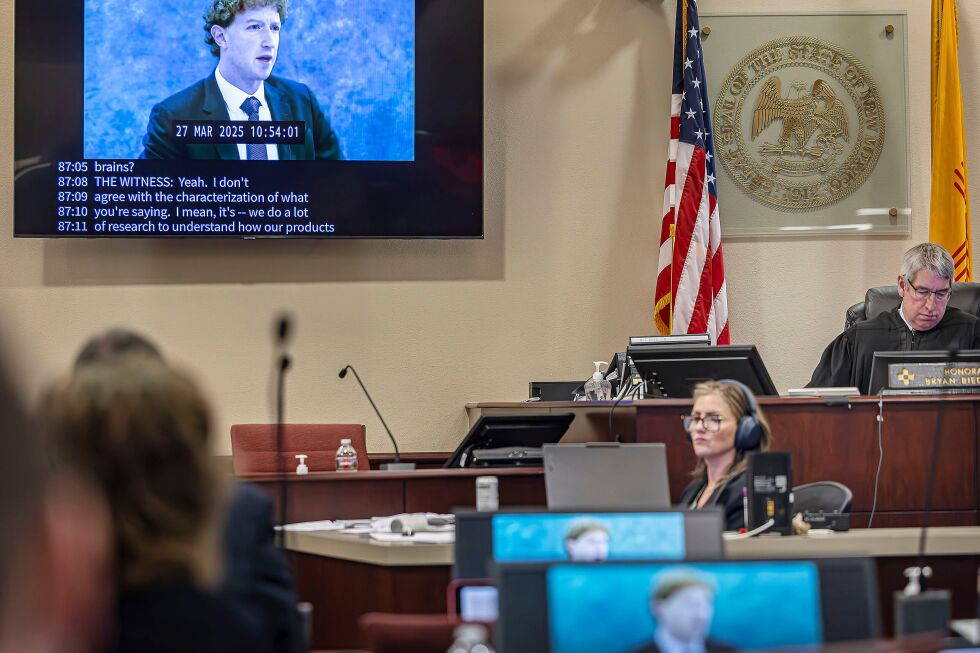

Over six weeks, jurors heard testimony from dozens of witnesses, including teachers, psychiatric experts, state investigators, Meta executives, and former employees. The trial has drawn significant attention because it could establish a precedent for how tech platforms are held accountable for their impact on young users.

New Mexico prosecutors have accused Meta—owner of Instagram, Facebook, and WhatsApp—of prioritizing profits over safety in violation of state consumer protection laws. Their case centers on concerns about algorithm safety and various messaging features and settings that allegedly put children at risk.

“It’s clear that young people are spending too much time on Meta’s products, they’ve lost control,” prosecution attorney Linda Singer told jurors during closing statements. “Meta knew that and it didn’t disclose it.”

The state’s case alleges Meta’s algorithms recommended sensational and harmful content to teenagers while failing to effectively enforce its minimum user age of 13. Singer emphasized that the safety issues weren’t accidents but rather “a product of a corporate philosophy that chose growth and engagement over children’s safety.”

Meta’s defense countered these claims by highlighting the company’s substantial investments in platform safety. Attorney Kevin Huff pointed to witness testimony about Meta’s automated safety features and dedicated safety teams, noting that “Meta has 40,000 people working to make its apps as safe as possible.”

Huff acknowledged the imperfection of Meta’s systems, stating that “with billions of pieces of content every day, even the best system cannot catch all of it.” He added that U.S. government restrictions on collecting children’s data hamstring the company’s ability to enforce age limits effectively.

The defense insisted Meta has adequately disclosed the risks of its platforms through user agreements, websites, advertisements, and television. “Wherever it could get its message out, Meta was disclosing risk to the public,” Huff argued, adding that “common sense also says that parents and teens know that there is bad content on the internet, and on Facebook and Instagram specifically.”

The financial stakes are significant. Prosecutors have urged jurors to impose civil penalties that could exceed $2 billion, based on maximum penalties of $5,000 per violation across an estimated 208,700 monthly underage users in New Mexico. Huff called this request “a shocking number” and argued the state failed to provide examples of teenagers who chose to use Instagram because they misunderstood its risks.

This trial is only the first phase of the legal proceedings. If Meta is found liable, a second phase will follow in which a judge will determine whether the company created a public nuisance and should fund programs addressing alleged harms to children.

New Mexico Attorney General Raúl Torrez filed the lawsuit in 2023, accusing Meta of creating a “breeding ground” for predators targeting children and failing to disclose what it knew about these harmful effects. State investigators created fake social media accounts posing as children to document online sexual solicitations and Meta’s response.

The prosecution argued Monday that public assurances about safety from Meta executives, including founder Mark Zuckerberg and Instagram head Adam Mosseri, often contradicted internal studies and communications. “It was included in Meta’s internal research—again, research that didn’t get disclosed by Meta—one-in-three teens experienced problematic use,” Singer told jurors.

Traditionally, tech companies have enjoyed protection from liability for content posted on their platforms under Section 230 of the Communications Decency Act and First Amendment protections. However, prosecutors argue they’re not holding Meta accountable for user content but rather for how its algorithms push potentially harmful material.

The case comes as social media companies face mounting scrutiny nationwide. In California, a separate jury is already deliberating whether Meta and YouTube should be liable for harms to children using their platforms—a bellwether case that could influence thousands of similar pending lawsuits.

The New Mexico jury, drawn from residents of the politically progressive Santa Fe County, now holds significant power in determining whether one of the world’s most influential tech companies failed in its obligations to protect young users.
